Introduction to the Container Network Interface (CNI)

This guide explores the Container Network Interface (CNI), its architecture, specification, features, and popular plugins for Kubernetes networking.

As your Kubernetes cluster grows and hosts more workloads, networking complexity can quickly become a bottleneck. The Kubernetes networking model gives every pod its own IP address and requires that pods reach each other across nodes without NAT, which demands consistent, automated network configuration. In this guide, we’ll explore the Container Network Interface (CNI), covering its purpose, architecture, specification, and key features, before surveying the most popular CNI plugins in today’s ecosystem.

What Is CNI?

The Container Network Interface is a CNCF project defining a standard for configuring network interfaces in Linux and Windows containers. It provides:

- A specification for network configuration files (JSON).

- Libraries for writing networking plugins.

- A protocol that container runtimes (e.g., containerd, CRI-O) use to invoke plugins.

When a container is created or deleted, the runtime calls CNI plugins to allocate or clean up its network resources, giving orchestrators like Kubernetes a single, unified interface for container networking.

How CNI Works

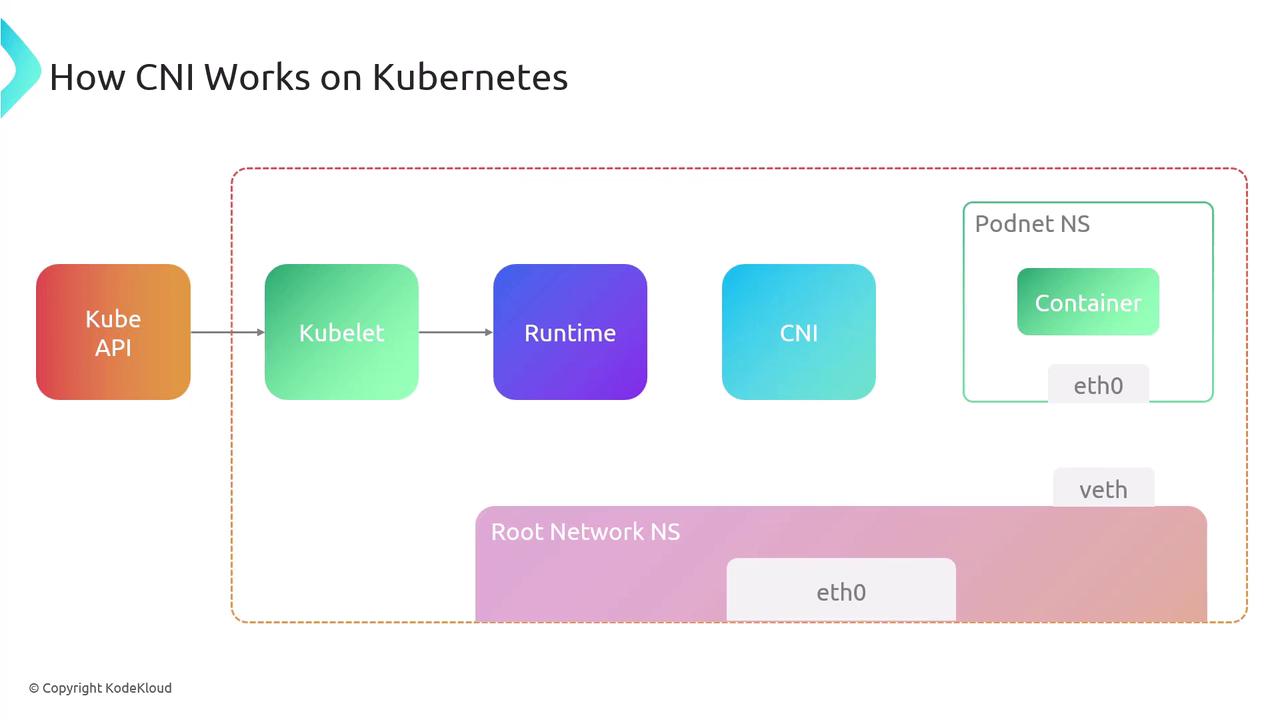

Under the hood, the container runtime handles network setup by invoking one or more CNI plugin binaries. Here’s the typical workflow in Kubernetes:

- API Server → Kubelet: Request to create a Pod.

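- Kubelet → Runtime: Ask the runtime, through the Container Runtime Interface (CRI), to create the pod sandbox and its new network namespace.

- Runtime → CNI Plugin: Invoke the plugin(s) with the JSON configuration on stdin and call parameters in environment variables.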

- Plugin(s) → Runtime: Return interface details on stdout.

- Runtime → Container: Launch container in the prepared namespace.

CNI plugin binaries must be installed on every node (typically in /opt/cni/bin). Without them, pods may fail to start.
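A missing binary usually surfaces as the runtime failing to find an executable whose name matches a plugin's type. The Python sketch below illustrates that lookup under stated assumptions: find_plugin is a hypothetical helper (not part of any CNI library), and it treats the search path as a CNI_PATH-style list of directories joined with the OS path separator.

```python
import os
from typing import Optional


def find_plugin(plugin_type: str, cni_path: str) -> Optional[str]:
    """Return the path of the first executable named plugin_type on cni_path, else None."""
    # Hypothetical helper: cni_path is a list of directories joined with
    # os.pathsep (":" on Linux), e.g. "/opt/cni/bin:/usr/libexec/cni".
    for directory in cni_path.split(os.pathsep):
        if not directory:
            continue  # ignore empty entries, such as a trailing separator
        candidate = os.path.join(directory, plugin_type)
        # The binary must exist as a regular file and be executable.
        if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
            return candidate
    return None
```

For example, find_plugin("bridge", "/opt/cni/bin") would return /opt/cni/bin/bridge on a node with the reference plugins installed, and None on a node without them.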

CNI Specification Overview

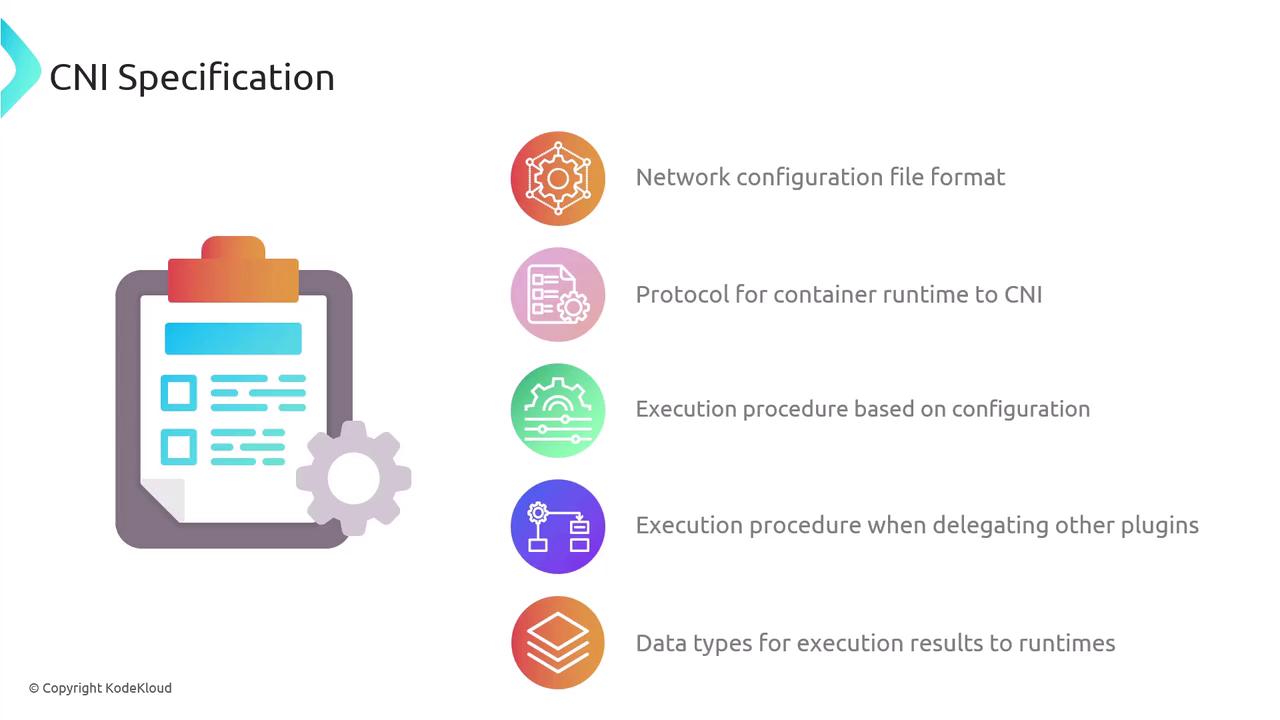

The CNI spec comprises:

- A JSON format for network configuration.

- A naming convention for network definitions and plugin lists.

- An execution protocol that passes call parameters through environment variables and the configuration through stdin.

- A mechanism for chaining multiple plugins.

- Standard data types for operation results.

Network Configuration Files

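Network configuration is stored as JSON (conventionally under /etc/cni/net.d on each node) and interpreted by the runtime at execution time. A configuration list chains multiple plugins, each naming its binary in the type field: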

```json
{
  "cniVersion": "1.1.0",
  "name": "dbnet",
  "plugins": [
    {
      "type": "bridge",
      "bridge": "cni0",
      "keyA": ["some", "plugin", "configuration"],
      "ipam": {
        "type": "host-local",
        "subnet": "10.1.0.0/16",
        "gateway": "10.1.0.1",
        "routes": [{"dst": "0.0.0.0/0"}]
      },
      "dns": {"nameservers": ["10.1.0.1"]}
    },
    {
      "type": "tuning",
      "capabilities": {"mac": true},
      "sysctl": {"net.core.somaxconn": "500"}
    },
    {
      "type": "portmap",
      "capabilities": {"portMappings": true}
    }
  ]
}
```

The runtime invokes each object in plugins in sequence during setup, and in reverse order during teardown.
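To make the ordering concrete, here is a minimal Python sketch that parses a configuration list like the one above and reports which plugin types run, and in what order, for a given command. The helper names load_conflist and execution_order are hypothetical, and the keys it treats as required are a simplification of the spec's validation rules.

```python
import json
from typing import Any, Dict, List

# Keys this sketch treats as required at the list level (a simplification).
REQUIRED_LIST_KEYS = ("cniVersion", "name", "plugins")


def load_conflist(text: str) -> Dict[str, Any]:
    """Parse a CNI configuration list and check the fields the runtime relies on."""
    conf = json.loads(text)
    for key in REQUIRED_LIST_KEYS:
        if key not in conf:
            raise ValueError(f"configuration list is missing {key!r}")
    for index, plugin in enumerate(conf["plugins"]):
        # Every plugin entry must name the binary to run via "type".
        if "type" not in plugin:
            raise ValueError(f"plugin entry {index} has no 'type'")
    return conf


def execution_order(conf: Dict[str, Any], command: str) -> List[str]:
    """Plugin types in the order the runtime runs them for a given command."""
    types = [plugin["type"] for plugin in conf["plugins"]]
    # ADD and CHECK walk the list forwards; DEL walks it backwards.
    return list(reversed(types)) if command == "DEL" else types
```

Applied to the dbnet list, ADD would run bridge, tuning, portmap, and DEL would run portmap, tuning, bridge.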

Plugin Execution Protocol

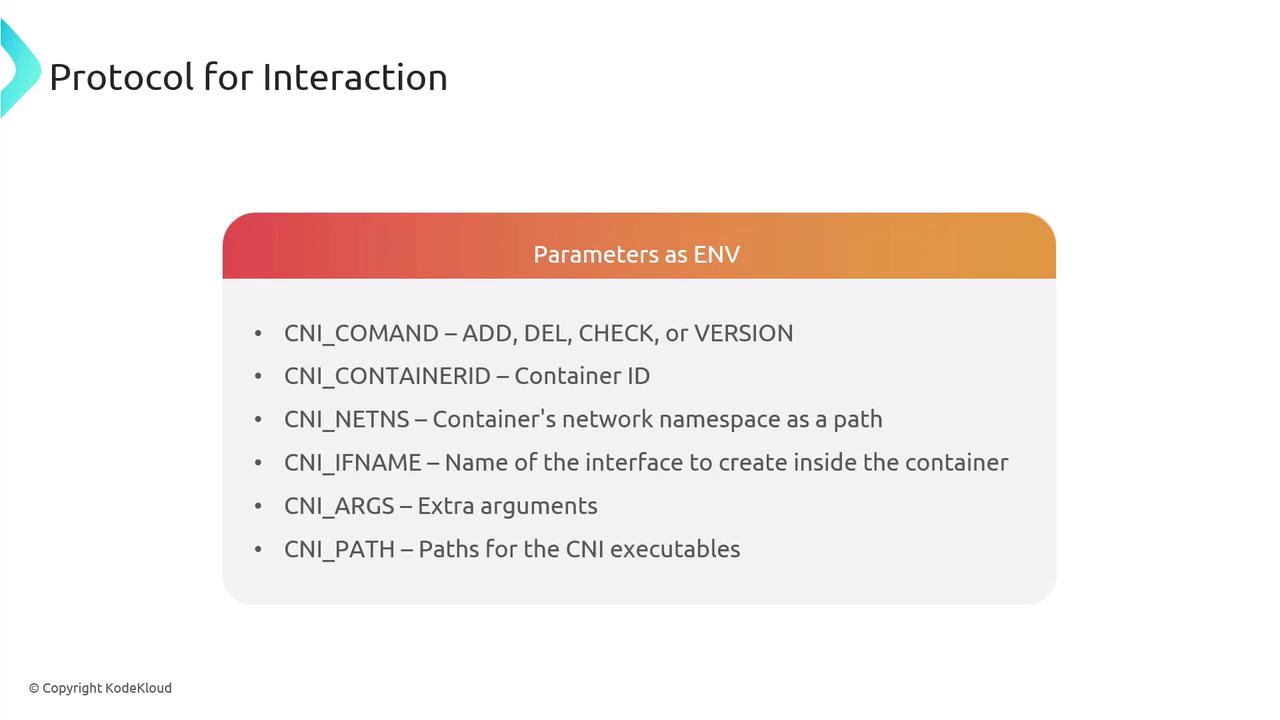

CNI relies on environment variables to pass context:

| Variable | Description |
|---|---|
| CNI_COMMAND | Operation (ADD, DEL, CHECK, VERSION) |
| CNI_CONTAINERID | Unique container identifier |
| CNI_NETNS | Path to the container’s network namespace |
| CNI_IFNAME | Interface name to create inside the container |
| CNI_ARGS | Extra arguments, as semicolon-separated KEY=VALUE pairs |
| CNI_PATH | List of directories to search for CNI plugin binaries |
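The variables above can be illustrated with a short Python sketch of the runtime side of one plugin call: it assembles the CNI_* environment, writes the configuration to the plugin's stdin, and parses its stdout. build_cni_env and invoke_plugin are hypothetical names, and the error handling is deliberately simplified (a real runtime would also inspect error codes and capture stderr).

```python
import json
import os
import subprocess
from typing import Any, Dict, Optional


def build_cni_env(command: str, container_id: str, netns: str, ifname: str,
                  cni_path: str, args: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Assemble the CNI_* variables for one plugin call (hypothetical helper)."""
    env = {
        "CNI_COMMAND": command,
        "CNI_CONTAINERID": container_id,
        "CNI_NETNS": netns,
        "CNI_IFNAME": ifname,
        "CNI_PATH": cni_path,
    }
    if args:
        # CNI_ARGS is a semicolon-separated list of KEY=VALUE pairs.
        env["CNI_ARGS"] = ";".join(f"{key}={value}" for key, value in args.items())
    return env


def invoke_plugin(binary: str, config: Dict[str, Any], env: Dict[str, str]) -> Dict[str, Any]:
    """Run a plugin: config on stdin, CNI_* in the environment, result on stdout."""
    proc = subprocess.run(
        [binary],
        input=json.dumps(config).encode(),
        env={**os.environ, **env},
        capture_output=True,
        check=False,
    )
    # DEL and CHECK may print nothing on success, so treat empty output as {}.
    result = json.loads(proc.stdout) if proc.stdout else {}
    if proc.returncode != 0:
        # On failure a plugin prints an error object (code, msg, details).
        raise RuntimeError(f"plugin {binary} failed: {result}")
    return result
```

For instance, build_cni_env("ADD", "abc123", "/var/run/netns/abc123", "eth0", "/opt/cni/bin") produces the environment for attaching eth0; the container ID and namespace path are made-up values.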

Core Operations

- ADD: Attach and configure an interface.

- DEL: Detach the interface and clean up its resources.

- CHECK: Validate current network state.

- VERSION: Query supported CNI versions.

A network attachment is uniquely identified by CNI_CONTAINERID + CNI_IFNAME. Plugins read JSON config from stdin and write results to stdout.
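On the plugin side, the same protocol reduces to reading CNI_COMMAND, parsing stdin, and printing a result. The Python sketch below is an illustration under assumptions, not the reference library: dispatch, the handler signature, and the supported-version list are all hypothetical, and it keys per-attachment state on the (CNI_CONTAINERID, CNI_IFNAME) pair.

```python
import json
from typing import Any, Callable, Dict, Mapping, Optional, Tuple

# Hypothetical set of spec versions this example plugin claims to support.
SUPPORTED_VERSIONS = ["0.4.0", "1.0.0", "1.1.0"]

AttachmentKey = Tuple[str, str]  # (CNI_CONTAINERID, CNI_IFNAME)
Handler = Callable[[AttachmentKey, Dict[str, Any]], Optional[Dict[str, Any]]]


def dispatch(env: Mapping[str, str], stdin_text: str,
             handlers: Dict[str, Handler]) -> Tuple[str, int]:
    """Handle one plugin invocation and return (stdout text, exit code)."""
    command = env.get("CNI_COMMAND", "")
    config = json.loads(stdin_text) if stdin_text else {}
    if command == "VERSION":
        # VERSION reports which spec versions the plugin understands.
        return json.dumps({
            "cniVersion": config.get("cniVersion", SUPPORTED_VERSIONS[-1]),
            "supportedVersions": SUPPORTED_VERSIONS,
        }), 0
    handler = handlers.get(command)
    if handler is None:
        # Code 4: invalid or missing required environment variables.
        return json.dumps({
            "cniVersion": config.get("cniVersion", ""),
            "code": 4,
            "msg": "Invalid necessary environment variables",
            "details": f"unsupported CNI_COMMAND {command!r}",
        }), 1
    # A network attachment is identified by container ID plus interface name.
    key = (env["CNI_CONTAINERID"], env["CNI_IFNAME"])
    result = handler(key, config)
    # ADD prints a result object; DEL and CHECK print nothing on success.
    return (json.dumps(result) if result is not None else ""), 0
```

A real plugin would call dispatch with os.environ and sys.stdin.read(), print the returned text, and exit with the returned code.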

Execution Flow

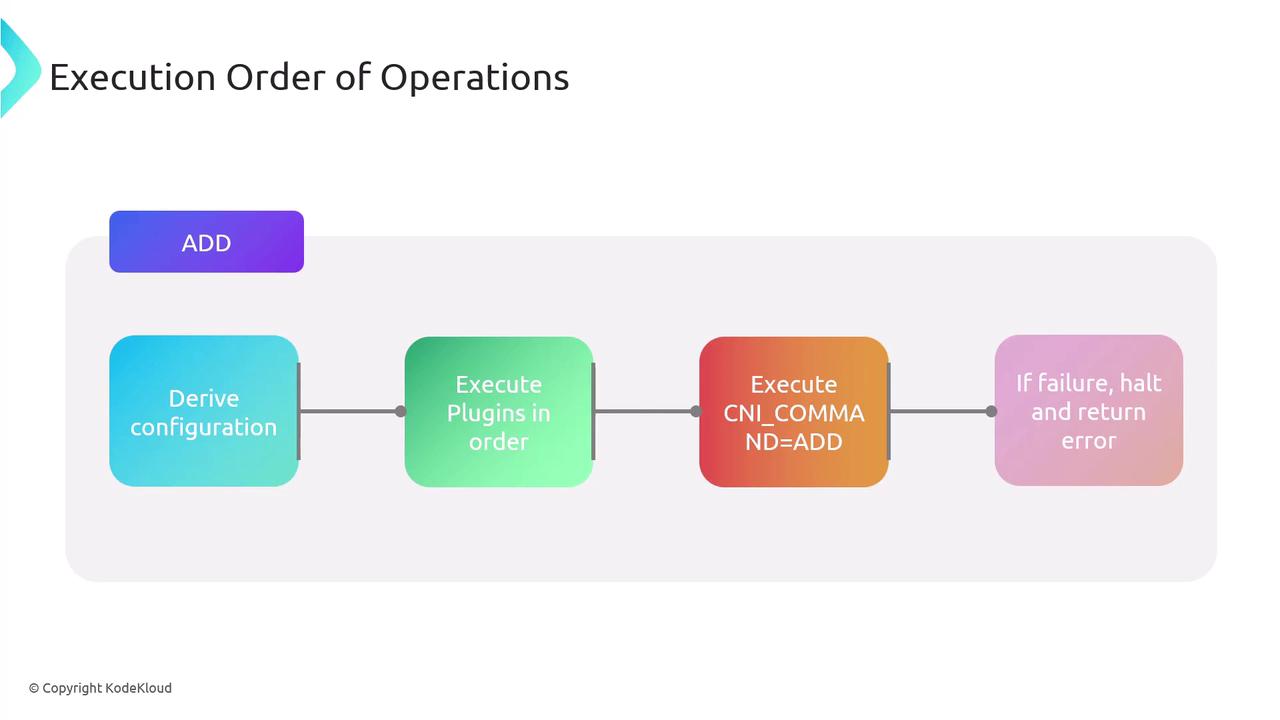

When running ADD:

- Derive the network configuration.

- Execute the plugin binaries in the listed order with CNI_COMMAND=ADD.

- Halt on any failure and return an error.

- Persist the success result for later CHECK or DEL operations.

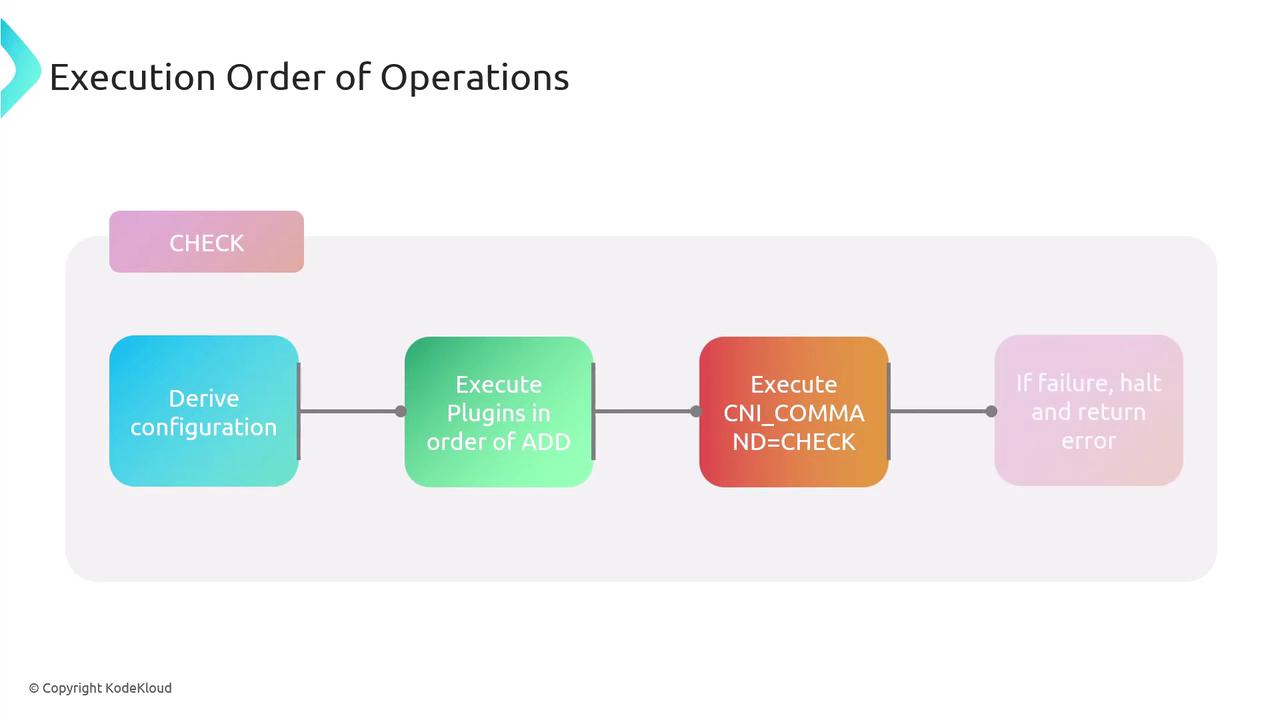

The DEL operation runs plugins in reverse order. CHECK follows the same sequence as ADD but performs validations only.
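The ordering rules can be sketched in Python as follows. The sketch assumes a hypothetical run callable that executes one plugin binary and returns its parsed result, raising on failure. derive_config copies the list-level name and cniVersion into each plugin's configuration and injects prevResult, which is how each plugin in an ADD chain sees the result of the one before it. Capabilities and runtimeConfig handling are omitted.

```python
from typing import Any, Callable, Dict, Optional

# Hypothetical stand-in for "exec the plugin binary and parse its stdout";
# it receives (command, plugin config) and returns a result or None.
RunPlugin = Callable[[str, Dict[str, Any]], Optional[Dict[str, Any]]]


def derive_config(conflist: Dict[str, Any], plugin: Dict[str, Any],
                  prev_result: Optional[Dict[str, Any]]) -> Dict[str, Any]:
    """Copy list-level name/cniVersion into one plugin's config, plus prevResult."""
    config = dict(plugin)
    config["name"] = conflist["name"]
    config["cniVersion"] = conflist["cniVersion"]
    if prev_result is not None:
        config["prevResult"] = prev_result
    return config


def add_network_list(conflist: Dict[str, Any], run: RunPlugin) -> Optional[Dict[str, Any]]:
    """ADD: run plugins in order, feeding each plugin's result to the next."""
    result = None
    for plugin in conflist["plugins"]:
        # run() raising an exception halts the chain at the failing plugin.
        result = run("ADD", derive_config(conflist, plugin, result))
    return result  # the runtime persists this for later CHECK/DEL


def del_network_list(conflist: Dict[str, Any], cached: Optional[Dict[str, Any]],
                     run: RunPlugin) -> None:
    """DEL: run plugins in reverse order, each given the cached ADD result."""
    for plugin in reversed(conflist["plugins"]):
        run("DEL", derive_config(conflist, plugin, cached))


def check_network_list(conflist: Dict[str, Any], cached: Dict[str, Any],
                       run: RunPlugin) -> None:
    """CHECK: same order as ADD, validating against the cached result."""
    for plugin in conflist["plugins"]:
        run("CHECK", derive_config(conflist, plugin, cached))
```

In this sketch the value returned by add_network_list is what a runtime would store and later pass as cached to check_network_list and del_network_list.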

Chaining and Delegation

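Beyond chaining plugins in a list, a plugin can delegate part of its work to another plugin; for example, the bridge plugin in the configuration above hands IP address management to the host-local plugin named in its ipam section. If a delegated plugin fails during ADD, the parent invokes DEL on its delegates before returning an error, so partially allocated resources are cleaned up.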
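A sketch of that cleanup rule in Python, assuming a hypothetical invoke(name, command, config) callable that runs a delegated plugin located via CNI_PATH and raises on failure. add_with_delegates is likewise a hypothetical name; in practice a main plugin typically delegates to a single IPAM plugin, but the sketch accepts a list to show the reverse-order cleanup.

```python
from typing import Any, Callable, Dict, List, Optional

# Hypothetical stand-in for executing a delegated plugin binary.
Invoke = Callable[[str, str, Dict[str, Any]], Optional[Dict[str, Any]]]


def add_with_delegates(delegates: List[str], config: Dict[str, Any],
                       invoke: Invoke) -> List[Optional[Dict[str, Any]]]:
    """Call each delegate with ADD; if one fails, DEL every delegate called so far."""
    called: List[str] = []
    results: List[Optional[Dict[str, Any]]] = []
    for delegate in delegates:
        called.append(delegate)
        try:
            # Delegates receive the same configuration the parent received.
            results.append(invoke(delegate, "ADD", config))
        except Exception:
            # Clean up in reverse order, including the delegate that failed,
            # since DEL is expected to tolerate partially allocated resources.
            for name in reversed(called):
                invoke(name, "DEL", config)
            raise
    return results
```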

Result Types

CNI operations return standardized JSON for:

- Success: Contains cniVersion, the configured interfaces, IPs, routes, and DNS settings.

- Error: Includes code, msg, details, and cniVersion.

- Version: Lists the supported spec versions.
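As an illustration of the success shape, the Python sketch below builds a minimal ADD result for one interface with one address. success_result is a hypothetical helper, and the IPv4 default route and sample values are assumptions; each ips entry refers to its interface by index into the interfaces list.

```python
import ipaddress
from typing import Any, Dict, List


def success_result(cni_version: str, ifname: str, mac: str, sandbox: str,
                   address: str, gateway: str, nameservers: List[str]) -> Dict[str, Any]:
    """Build a minimal ADD success result for one interface with one IP."""
    # Validate inputs up front; both calls raise ValueError on malformed values.
    ipaddress.ip_interface(address)
    ipaddress.ip_address(gateway)
    return {
        "cniVersion": cni_version,
        "interfaces": [{"name": ifname, "mac": mac, "sandbox": sandbox}],
        # "interface" is an index into the "interfaces" list above.
        "ips": [{"address": address, "gateway": gateway, "interface": 0}],
        # Assumed IPv4 default route via the gateway.
        "routes": [{"dst": "0.0.0.0/0", "gw": gateway}],
        "dns": {"nameservers": nameservers},
    }
```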

Example error response:

```json
{
  "cniVersion": "1.1.0",
  "code": 7,
  "msg": "Invalid Configuration",
  "details": "Network 192.168.0.0/31 too small to allocate from."
}
```
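An error like this is the kind an IPAM range check produces. The Python sketch below reproduces that message under an assumption about the rule: it rejects any subnet whose prefix leaves fewer than two host bits, which is what makes 192.168.0.0/31 too small. check_subnet is a hypothetical name; 7 is the spec's well-known code for an invalid network configuration.

```python
import ipaddress
from typing import Any, Dict, Optional

INVALID_NETWORK_CONFIG = 7  # CNI well-known error code for invalid network config


def check_subnet(subnet: str, cni_version: str = "1.1.0") -> Optional[Dict[str, Any]]:
    """Return a CNI error result if subnet leaves no room to allocate, else None."""
    network = ipaddress.ip_network(subnet)
    # Assumed rule: reject prefixes with fewer than two host bits
    # (an IPv4 /31 or /32, an IPv6 /127 or /128).
    if network.prefixlen > network.max_prefixlen - 2:
        return {
            "cniVersion": cni_version,
            "code": INVALID_NETWORK_CONFIG,
            "msg": "Invalid Configuration",
            "details": f"Network {network} too small to allocate from.",
        }
    return None
```

With these rules, the 10.1.0.0/16 subnet from the dbnet configuration passes, while 192.168.0.0/31 yields the error object shown above.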

Key Features of CNI

- Standardized Interface: Unified API for all container runtimes.

- Flexibility: Supports a vast ecosystem of plugins.

- Dynamic Configuration: Runtime-driven setup and teardown.

- Ease of Integration: Embeds directly into container runtimes.

- Compatibility: Versioned specs for interoperability.

Popular CNI Plugins

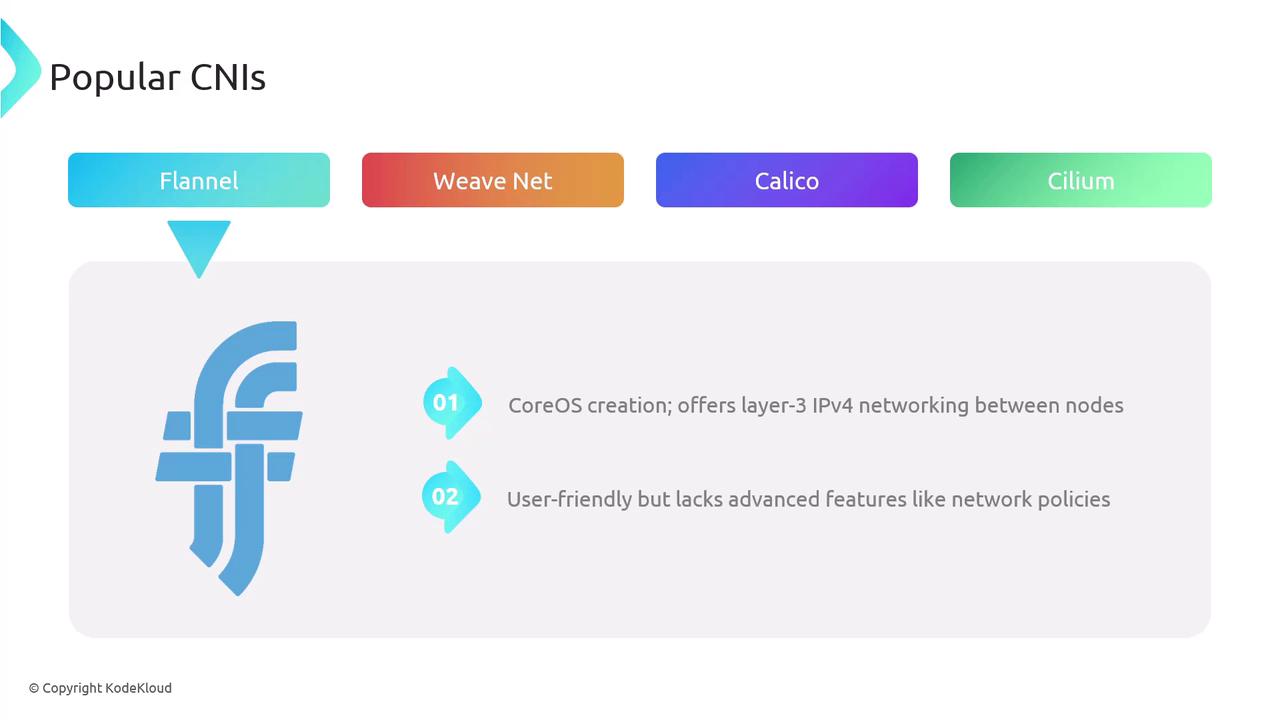

Flannel – A CoreOS project providing simple IPv4 layer-3 networking.

Weave Net – Weaveworks’ layer-2 overlay with built-in encryption and network policies.

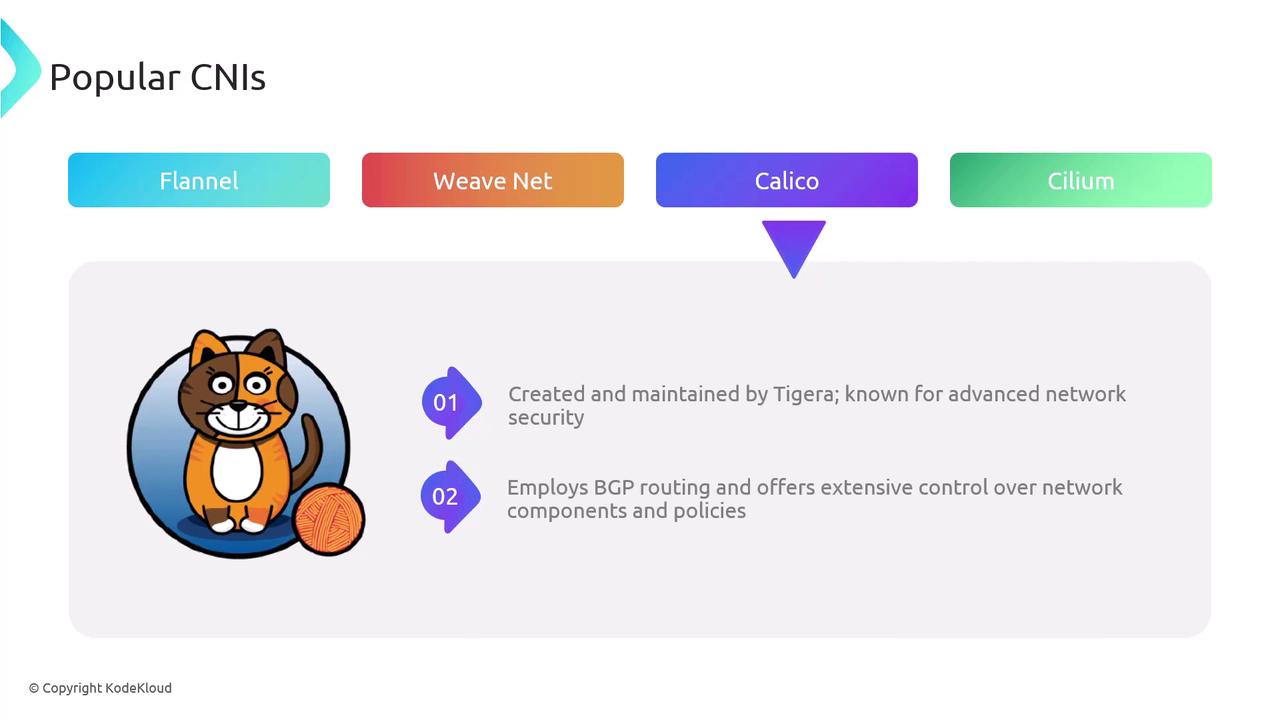

Calico – Tigera’s solution featuring scalable BGP routing and robust network policies.

Cilium – Isovalent’s eBPF-based plugin, offering deep network and security visibility and control, plus inter-cluster service mesh capabilities.

Many cloud providers (AWS, Azure, GCP) also offer CNI implementations optimized for their platforms.

Comparison of Popular CNIs

| Plugin | Type | Key Features |
|---|---|---|
| Flannel | L3 Overlay | Simple IPv4 overlay, no built-in network policy |
| Weave Net | L2 Overlay | Encryption, built-in network policies |
| Calico | BGP Routing | Scalable, advanced security policies |
| Cilium | eBPF-Powered | Fine-grained policies, service mesh |

Conclusion

CNI delivers a standardized, extensible framework that streamlines Kubernetes networking. By understanding its specification, execution model, and popular plugins, cluster operators can design robust, flexible network architectures.